On April 6th at 01:20:51 UTC I pasted three sentences into a text box. Thirteen minutes and fifty‑four seconds later, the whole app had been made mobile‑friendly — seven subtasks, six concurrent git worktrees, one PWA service worker, all written, reviewed, and merged to main without me touching anything. The task I filed was RAI-436, and its full input, verbatim:

The whole app needs to better support mobile. Test and validate the assistant and admin pages, for mobile (both browser and in the future PWA compliant). Currently it's ugly and more desktop centric.

198 characters. Two sentences of grievance, one capability request. That's the interface. Raiter is a self-hosted AI agent orchestrator — Go backend, SvelteKit frontend, one Docker container behind Tailscale — and almost every task it has shipped for me started as a couple of sentences pasted into that box. This post is about the four things that make that possible: decomposition, parallelism, the git worktree plumbing that keeps parallel agents from stepping on each other, and a multi‑model provider chain that picks a different model for each kind of job.

Decomposition: how "ugly and desktop centric" becomes a work plan

The triager read RAI-436 and routed it to the architect. Three minutes and forty‑eight seconds later, the architect had produced this:

- Establish shared mobile CSS foundation — designer

- Root nav bar mobile collapse — designer

- Admin tasks kanban mobile layout — designer

- Chat page mobile touch targets and input polish — designer

- Admin agents and providers mobile polish — designer

- PWA service worker for offline shell caching — worker

- Mobile validation pass — architect

Seven subtasks, three different roles. Five designers handling page‑level restyles. One worker for the PWA service worker, because that's JavaScript plumbing and not a CSS job. And one architect at the end — the "mobile validation pass" — gated by blocked_by on every other subtask in the list. If the validation pass finds the earlier work didn't actually produce a mobile‑usable app, it re-enters the architect role and decomposes the gap. It's a self‑policing plan.

I didn't ask for any of this shape. I didn't say "first do shared CSS, then the pages in parallel, then a validation pass, and put a PWA service worker in there while you're at it." I said the app was ugly on mobile. The architect's job description is producing a JSON list of subtasks with roles and blocked_by edges, and the orchestrator gives it nothing else to do — no tools, no file access, no ability to write code, just decompose. That constraint is what makes it good at it.
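To make that output concrete, here is a minimal sketch of what parsing and sanity-checking such a plan could look like. The `Subtask` struct, the `title` and `role` field names, and the `ParsePlan` helper are assumptions for illustration; only `blocked_by` comes from the description above, and Raiter's real schema may differ.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Subtask is a hypothetical shape for one item in the architect's output.
// Only the blocked_by key is taken from the post; the rest is assumed.
type Subtask struct {
	Title     string   `json:"title"`
	Role      string   `json:"role"`
	BlockedBy []string `json:"blocked_by"` // titles of subtasks that must finish first
}

// ParsePlan decodes the JSON list and rejects plans with missing fields
// or blocked_by edges that point at subtasks that are not in the plan.
func ParsePlan(raw []byte) ([]Subtask, error) {
	var plan []Subtask
	if err := json.Unmarshal(raw, &plan); err != nil {
		return nil, fmt.Errorf("decode plan: %w", err)
	}
	known := make(map[string]bool, len(plan))
	for _, s := range plan {
		if s.Title == "" || s.Role == "" {
			return nil, fmt.Errorf("subtask %q: title and role are required", s.Title)
		}
		known[s.Title] = true
	}
	for _, s := range plan {
		for _, dep := range s.BlockedBy {
			if !known[dep] {
				return nil, fmt.Errorf("subtask %q blocked by unknown %q", s.Title, dep)
			}
		}
	}
	return plan, nil
}

func main() {
	raw := []byte(`[
	  {"title": "PWA service worker for offline shell caching", "role": "worker"},
	  {"title": "Mobile validation pass", "role": "architect",
	   "blocked_by": ["PWA service worker for offline shell caching"]}
	]`)
	plan, err := ParsePlan(raw)
	if err != nil {
		panic(err)
	}
	for _, s := range plan {
		fmt.Printf("%-10s %s (blocked by %d)\n", s.Role, s.Title, len(s.BlockedBy))
	}
}
```

Validating the edges at parse time means a malformed plan fails before anything is dispatched, rather than leaving a subtask blocked forever on a dependency that does not exist.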

This is the part of agent systems I think is most undersold. Not the LLM. The pipeline. A model can one‑shot a component. It cannot, in general, look at a vague grievance and produce sensible unit‑of‑work boundaries that other agents can execute in parallel without stepping on each other. The decomposition is a separate job, done by a separate role, with a separate prompt, and its output goes straight into SQLite where the rest of the pipeline picks it up.

Parallelism: six worktrees, one repo, no lost work

All seven subtasks of RAI-436 were dispatched at the same second: 2026-04-06T01:24:39Z. The PWA service worker finished first at 01:26:12 — 93 seconds after dispatch. The shared mobile CSS foundation finished 47 seconds after that. The five page‑level designer tasks ran concurrently in five separate git worktrees and were all done within eight minutes. The architect's validation pass gated on everything and closed the parent task out at 01:34:45. Total wall time from "the whole app needs to better support mobile" to merged on main: thirteen minutes and fifty‑four seconds.
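The gating on the validation pass can be sketched as a simple readiness check over blocked_by edges. The `Task` type and `Ready` function are hypothetical names, and this sketch ignores in-flight state (a real scheduler would also skip tasks that are already running).

```go
package main

import "fmt"

// Task is a hypothetical in-memory view of one subtask row.
type Task struct {
	ID        string
	BlockedBy []string
	Done      bool
}

// Ready returns, in input order, the IDs of tasks that are not done and
// whose blockers have all completed.
func Ready(tasks []Task) []string {
	done := make(map[string]bool, len(tasks))
	for _, t := range tasks {
		done[t.ID] = t.Done
	}
	var ready []string
	for _, t := range tasks {
		if t.Done {
			continue
		}
		blocked := false
		for _, dep := range t.BlockedBy {
			if !done[dep] {
				blocked = true
				break
			}
		}
		if !blocked {
			ready = append(ready, t.ID)
		}
	}
	return ready
}

func main() {
	tasks := []Task{
		{ID: "css-foundation"},
		{ID: "pwa-service-worker"},
		{ID: "validation-pass", BlockedBy: []string{"css-foundation", "pwa-service-worker"}},
	}
	fmt.Println(Ready(tasks)) // the validation pass is held back
	tasks[0].Done, tasks[1].Done = true, true
	fmt.Println(Ready(tasks)) // only the validation pass remains
}
```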

On its peak day so far, Raiter completed 124 tasks. The database records 276 tasks authored through Codex, 10 through Claude, and 9 through Gemini; the Codex skew is the cost rebalance showing up in the data (more on that below).

The parallelism isn't impressive because it's fast. It's impressive because it works at all. Six workers in six git worktrees, writing to the same repo, rebasing on each other as main moves, without losing work. Serial execution of RAI-436 would have been seven round‑trips through the reviewer, one at a time, each blocking the next — closer to forty minutes than fourteen. For a single‑user system like this, parallelism is the difference between "I'll check on it later" and "I'll wait for it."

The worktree lifecycle is where the real bugs lived

Concurrent agents need isolated workspaces. Raiter gives each task its own git worktree — check out a task branch cheaply, let the agent write and commit, then tear it down. That's the one‑sentence version. The real version is 30+ commits fixing things I didn't know could break.

Stale state. When an agent process dies — rate limit, OOM, a Go panic in the orchestrator, the host rebooting — it leaves tombstones. A .git/refs/heads/task/RAI-123.lock file says another process is writing to that ref. Metadata in .git/worktrees/RAI-123/ says the worktree is alive when the directory is already gone. The next dispatch for that task hits one of these artifacts and refuses to create the worktree. The fix is a self‑healing Create: sweep stale locks, detect orphan metadata, and if git worktree add still fails on an existing branch, git branch -D it and recreate from main. Nuke and recreate isn't elegant, but neither is a pipeline that stops because of a lockfile from yesterday.

Silent work loss. Before a subtask goes to review, the orchestrator rebases its worktree onto the current main so the reviewer sees a diff against real head. For a while that rebase used git rebase -X theirs, which looked like the right thing: "on conflict, take the incoming side." It was backwards. A rebase swaps the sides relative to a merge: "ours" is the branch being rebased onto and "theirs" is the commits being replayed, so the flag did not pick the side I thought it did. Worse, whichever side a strategy option favors, the other side's conflicting hunks are discarded without an error, so any time two workers touched the same file, work silently disappeared. The reviewer would see a clean diff, approve it, and the merge queue would cheerfully ship it. The fix was a one-line removal of the flag and then several more commits to make the rebase best-effort: if it conflicts, log the conflict, leave the worktree on its old base, and let the reviewer see the stale diff instead of a truncated one. Loud failure beats quiet data loss every time.

Permissions that lie. The orchestrator runs in the container as root. Agent subprocesses run as a non‑root agent user, because giving Claude Code and Codex --dangerously-bypass-approvals-and-sandbox together with root felt irresponsible. Worktrees get created by root, then the agent needs to write to them. chown -R agent works on a native filesystem. On a Docker bind mount it silently no‑ops and returns zero — the kernel won't let the container change UIDs on the host volume, but the syscall doesn't error. The agent writes, gets EPERM, its commit fails, the task errors out, and nothing in the logs points at chown. The fix was to stop trying to take ownership and use chmod -R a+rwX at every worktree entry point. World‑writable inside a single‑user container is fine. Nothing else has access to that volume.

Three bugs, each one invisible to the AI layer. The model wasn't wrong. The plumbing was. The orchestration layer is harder than the AI layer, and I don't think that ratio is ever going to invert.

Multi‑model orchestration

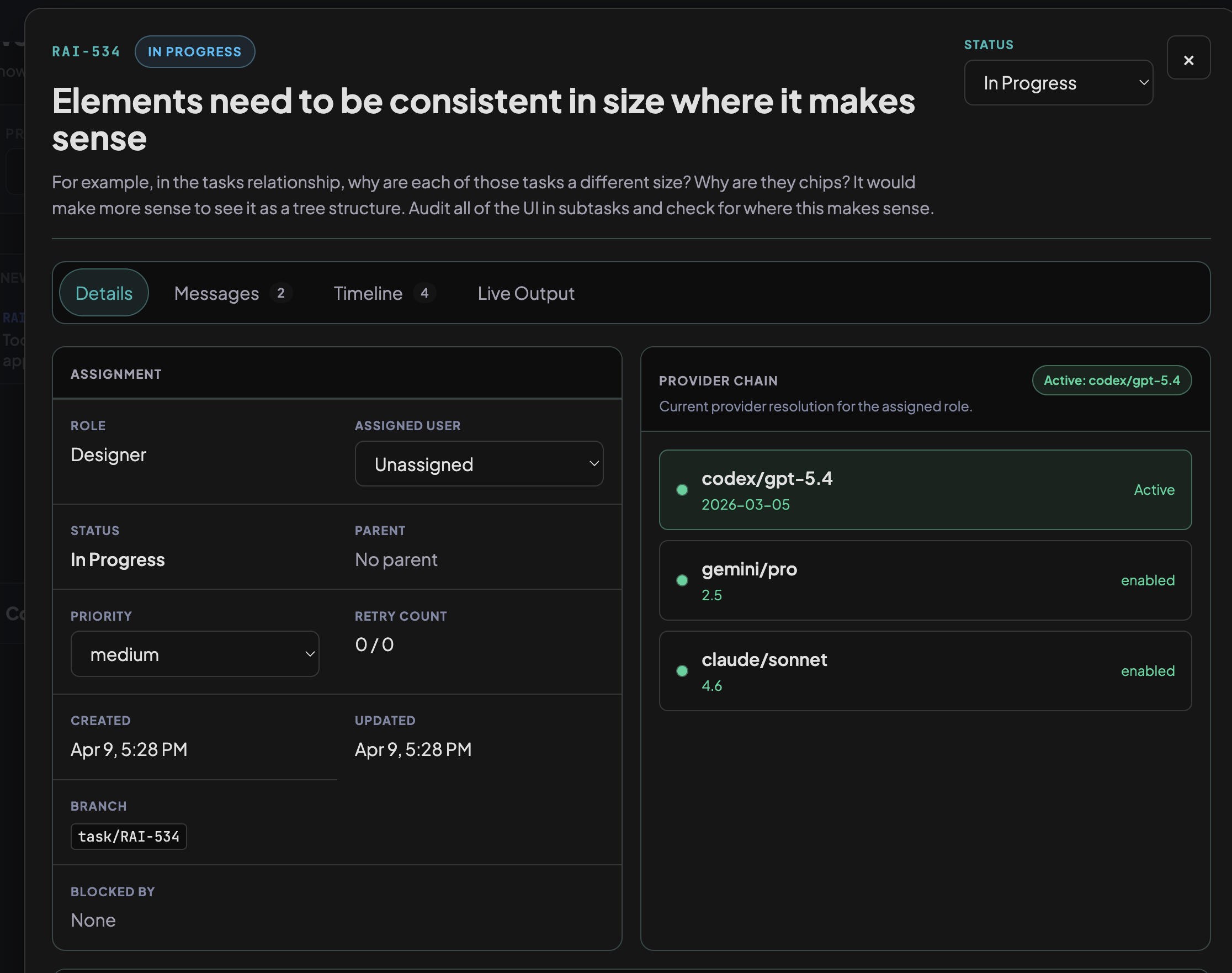

Eight roles, three providers — but not evenly. The provider chains fall into three tiers based on what the role actually does:

Routing tier (triager, ops): Gemini Flash → Codex mini → Claude Haiku. These roles classify tasks or handle small work. They don't write code, they don't do deep reasoning. Cheapest‑first makes sense; the Claude backstop is Haiku, the cheapest option that still keeps the chain diverse across providers.

Chat tier (assistant): Codex mini → Gemini Flash → Claude Sonnet. Similar cost profile to the routing tier, but the Claude backstop is Sonnet instead of Haiku. The reason: the assistant talks directly to the user, and fallback quality matters more when a human is waiting for a response.

Heavy‑work tier (worker, designer, reviewer, SRE): Codex gpt-5.4 → Gemini Pro → Claude Sonnet. These are the code‑writers and reviewers. For most of Raiter's life, Claude Opus led these chains — then migration 028 ("rebalance_provider_chains_for_cost") moved Codex gpt-5.4 to primary after it became good enough at code generation. SRE is the only role in this tier whose Claude backstop is Opus instead of Sonnet: if both cheaper providers have failed during an incident, you want the strongest reasoner, not the cheaper one.

Then there's the architect, which is the exception to all of this. It's the only role where Claude Opus is still primary — Codex and Gemini are the fallbacks. Migration 029 deliberately skipped the architect when rebalancing everything else for cost. Architecture and decomposition need the strongest reasoner available, because getting the task breakdown wrong cascades through the whole pipeline. I'd rather pay for Opus on the decomposition step than save money there and have workers confidently building the wrong thing.

| Role | 1st | 2nd | 3rd |
|---|---|---|---|
| architect | claude opus 4.6 | codex gpt-5.4 | gemini pro 2.5 |
| worker | codex gpt-5.4 | gemini pro 2.5 | claude sonnet 4.6 |
| designer | codex gpt-5.4 | gemini pro 2.5 | claude sonnet 4.6 |
| reviewer | codex gpt-5.4 | gemini pro 2.5 | claude sonnet 4.6 |
| sre | codex gpt-5.4 | gemini pro 2.5 | claude opus 4.6 |
| assistant | codex gpt-5.4-mini | gemini flash 2.5 | claude sonnet 4.6 |
| triager | gemini flash 2.5 | codex gpt-5.4-mini | claude haiku 4.5 |
| ops | gemini flash 2.5 | codex gpt-5.4-mini | claude haiku 4.5 |

Source: internal/agent/role.go

Codex is primary for five of eight roles. Gemini is primary for two (the cheapest tier). Claude is primary for exactly one (the architect). This distribution would have been inverted six months ago, when Claude Opus was primary for most roles. The chains are a living document: they reflect which model is currently best at which job, and they change when the answer changes.

Ease of use is the whole point

There's no CLI to learn. No YAML to write. No Jira to configure. There's a text box. You paste a gripe, a link, a one‑liner, a half‑formed idea. The triager classifies it, the architect decomposes it if it needs decomposing, workers and designers implement the pieces in parallel worktrees, the reviewer gates each one, and the merge queue rebases, tests, merges, and (if backend Go files changed) rebuilds the running binary and restarts itself. I have watched Raiter ship code to itself 27 times.

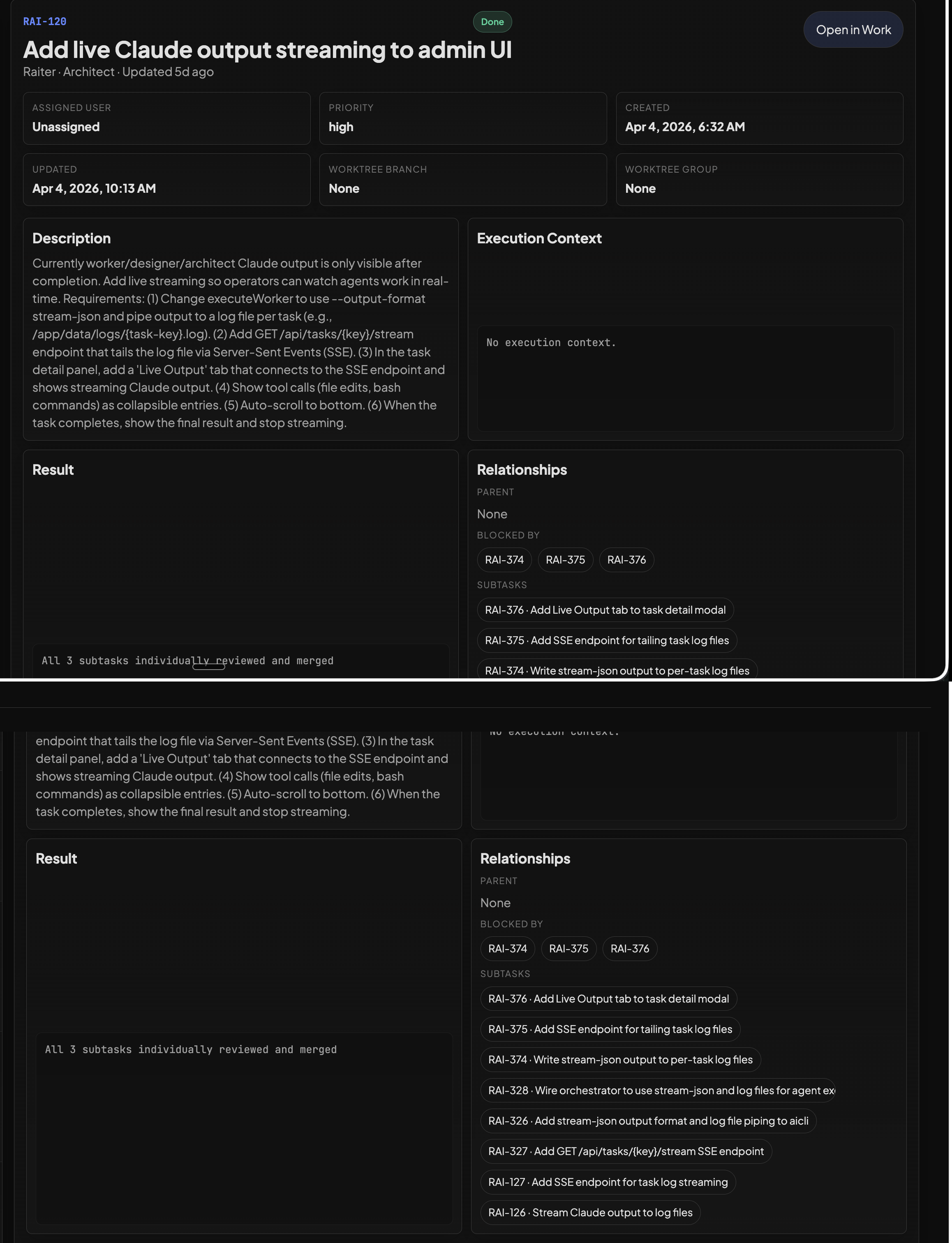

Every task in Raiter started this way. "tasks board is way too dense" → 6 subtasks. "providers should be more prominent" → 4 subtasks. "Task messsages" (sic, two s's) → 9 subtasks covering orchestrator plumbing, a task‑message helper script, Claude subprocess env var passing, real‑time streaming, and a live admin UI. None of those inputs were more than a couple of sentences. The pipeline did the rest.

Caveats

Every task in this post is on a codebase I wrote. All 463 completed tasks have been on projects where I have full context — mostly Raiter itself, plus a smaller Assistant project. The architect's decomposition works partly because I wrote the code it's decomposing, and the system prompts assume that familiarity. Pointing Raiter at an open‑source repo I've never touched is the next real test, and I expect the architect to struggle most. This is the most important caveat in the post.

There's also no cost tracking. I can tell you Codex authored 276 tasks and Claude authored 10, but I can't tell you what any individual task cost end‑to‑end. That's the biggest observability gap in the system.

Source

Raiter is a private project at chomey/raiter. All task IDs, timestamps, and numbers in this post are from the real production database as of April 10, 2026 — RAI-436 really did finish in thirteen minutes and fifty‑four seconds, and yes, the typo in RAI-103 ("Task messsages") is really in there. If you're working on agent orchestration or want to compare notes, I'm at jordan@fam.foo, and more posts land at fam.foo/aiblog.